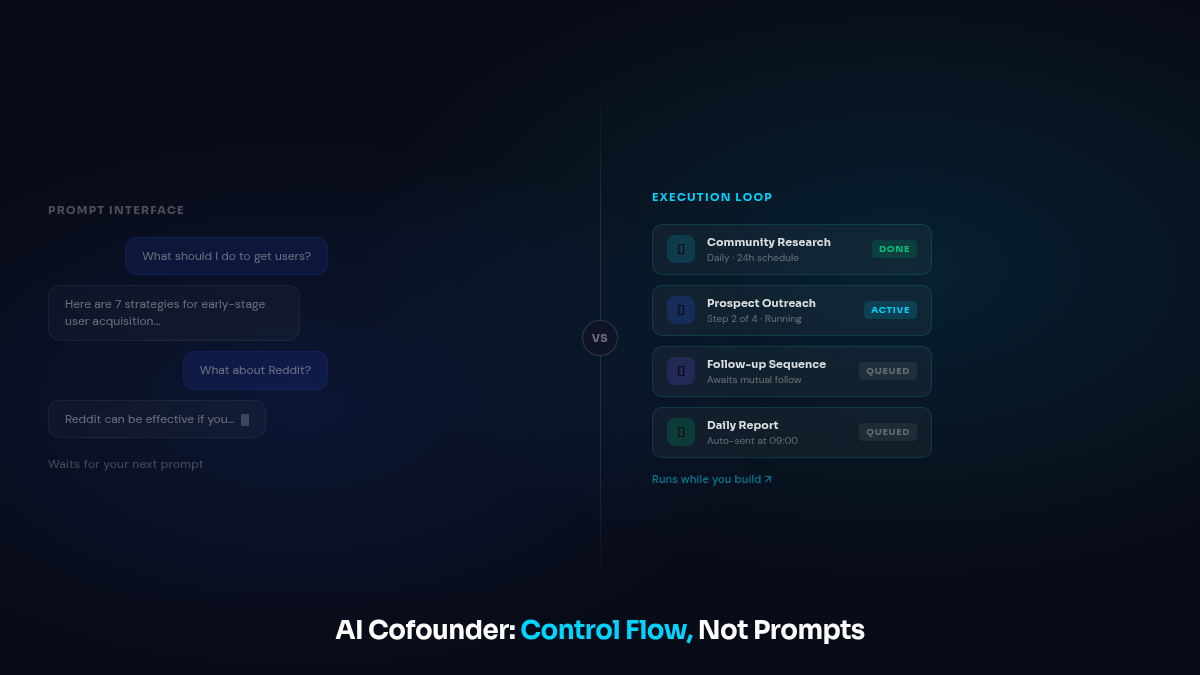

TL;DR: An AI cofounder is not a smarter chatbot. The difference that matters isn’t intelligence. It’s control flow: scheduled execution, owned domains, context that compounds. Most “AI cofounder” products are prompt interfaces. That’s not the same thing.

There’s a thread on Hacker News right now with 460+ upvotes. The title: “Agents Need Control Flow, Not More Prompts.” One comment near the top: “I spent six months writing better prompts to make agents useful. It didn’t work. The moment I added scheduling and structured outputs, everything changed.”

That gap, between prompting and control flow, is exactly why most products marketed as AI cofounders don’t deliver what their demos promise.

The demo problem

Most AI cofounder demos show the same thing: a chat interface. You type a question, the AI gives a thoughtful answer. Maybe it knows growth strategy. Maybe it’s trained on startup playbooks.

It looks capable. It is capable.

And it does nothing when you’re not typing at it.

That’s the problem. A chatbot is a query interface. Input in, output out. The frequency of its work matches the frequency of your prompts. Stop asking, nothing happens.

A cofounder doesn’t work that way. A cofounder has things they’re responsible for. They work on those things whether you check in or not. They report what happened, not a list of options to consider.

What “cofounder” actually implies

The word has a specific meaning outside the AI context.

A cofounder owns a domain, not just “helps with” it. They’re accountable for a chunk of the company’s execution. They make decisions within that domain without being asked, every day. They report back with results, not questions. And they close the feedback loop themselves, tracking performance and acting on it without you pulling the data first.

If your “cofounder” needs daily prompts to do anything, you have a capable assistant. That’s useful. But it’s a different job, and conflating the two leads to disappointment.

This isn’t a semantic argument. It changes the product architecture, what counts as success, and what actually makes you more productive.

The control flow problem (without the engineering jargon)

You don’t need to care about agent architecture to choose AI tools. But one distinction matters practically.

A prompt interface gives you output for every input. Nothing happens between your inputs. Nothing persists unless you explicitly maintain it.

A control flow system runs tasks on schedules. Outputs from step one become inputs for step two. State persists between runs. Context compounds. The loop runs without you triggering it.

Most AI cofounder products are the first. A cofounder, to function like one, needs to be the second.
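To make the distinction concrete, here is a minimal Python sketch of the second pattern. It is not CrossMind's implementation. The step functions, the `run_cycle` helper, and the JSON file used as a state store are all hypothetical stand-ins. What it shows is the shape: each run chains steps so one step's output feeds the next, and state written at the end of one run is loaded at the start of the next.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable

# Hypothetical step signature: (persisted state, previous step's output) -> output.
Step = Callable[[dict, object], object]


def run_cycle(steps: list[Step], state_path: Path) -> dict:
    """Run one scheduled cycle: chain the steps, then persist state to disk."""
    if state_path.exists():
        state = json.loads(state_path.read_text())
    else:
        state = {"runs": 0, "history": []}
    output = None
    for step in steps:
        output = step(state, output)  # step N's output becomes step N+1's input
    state["runs"] += 1
    state["history"].append(output)  # context carried into the next run
    state_path.write_text(json.dumps(state))
    return state


# Toy steps standing in for real work (e.g. finding threads, then ranking them).
def find_threads(state: dict, _prev: object) -> list[str]:
    return [f"thread-{state['runs']}-a", f"thread-{state['runs']}-b"]


def rank_threads(state: dict, threads: object) -> list[str]:
    return sorted(threads, reverse=True)


if __name__ == "__main__":
    path = Path(tempfile.mkdtemp()) / "state.json"
    # A real system would have a scheduler (cron, a job queue) trigger each run;
    # here two scheduled runs are simulated back to back.
    run_cycle([find_threads, rank_threads], path)
    final = run_cycle([find_threads, rank_threads], path)
    print(final["runs"], final["history"])
```

Nothing in `run_cycle` waits for a prompt. Replace the two manual calls with a cron entry or job scheduler and the loop keeps running, each run starting from where the last one left off.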

One founder put it plainly: “With ChatGPT, I feel like I’m doing the work with a very smart sidekick. With CrossMind, I come back and there’s a log of things that happened while I was building.”

That’s the architectural gap in daily practice.

What agents with control flow actually do

We published a full task log earlier this year: 79 tasks in a single day across six work categories. Rather than repeat that, here’s what control flow looks like from the outside.

Community research runs on a 24-hour schedule. The agent scans Reddit, Twitter, and Indie Hackers for conversations matching each user’s product and ICP. A 38-minute run produces 20 specific Reddit threads and 15 target Twitter accounts, ranked by relevance. No one triggered this. It ran because it was scheduled.

Prospect outreach runs as a multi-step sequence. The X Drop Pipeline doesn’t start with a message. It starts with a public reply in a relevant thread, then a follow, then waits for a mutual follow, then sends a contextual DM. Four steps over multiple days. Across 20 automated pipeline runs: 103 DMs delivered, 33% reply rate, 6 verified signups tracked from DM to PostHog-confirmed registration.

Before this pipeline: 69 cold DMs, zero replies. The sequence is what changed the result. Not smarter copy.
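The four-step sequence can be modeled as a small state machine. The sketch below assumes one scheduled tick per day and a hypothetical `follows_back` flag that some polling job keeps up to date. The stage names, the `Prospect` class, and the `advance` function are illustrative, not the pipeline's actual code.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Stage(Enum):
    NEW = auto()
    REPLIED = auto()   # public reply posted in a relevant thread
    FOLLOWED = auto()  # we followed the prospect
    MUTUAL = auto()    # prospect followed back
    DM_SENT = auto()   # contextual DM delivered


@dataclass
class Prospect:
    handle: str
    stage: Stage = Stage.NEW
    follows_back: bool = False  # hypothetical signal, refreshed by a polling job


def advance(p: Prospect) -> Prospect:
    """Move a prospect at most one step per scheduled tick."""
    if p.stage is Stage.NEW:
        p.stage = Stage.REPLIED
    elif p.stage is Stage.REPLIED:
        p.stage = Stage.FOLLOWED
    elif p.stage is Stage.FOLLOWED and p.follows_back:
        p.stage = Stage.MUTUAL
    elif p.stage is Stage.MUTUAL:
        p.stage = Stage.DM_SENT
    # FOLLOWED without a follow-back: keep waiting. DM_SENT: sequence is done.
    return p
```

The design choice that matters is the guard on `FOLLOWED`: a prospect who never follows back never receives a DM, which is what separates this sequence from a cold DM blast.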

Trial check-ins happen at Day 1, Day 3, and Day 7. Not because a human put them on a calendar. Because they’re built into the execution architecture.
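Building check-ins into the execution architecture can be as simple as a daily job that asks which trials reach Day 1, 3, or 7 today. A minimal sketch, assuming the signup date counts as Day 0; `checkin_dates` and `due_checkins` are hypothetical names.

```python
from datetime import date, timedelta

# Check-in offsets from the post. Assumption: the signup date counts as Day 0.
CHECKIN_DAYS = (1, 3, 7)


def checkin_dates(trial_start: date) -> list[date]:
    """All check-in dates for a trial that began on trial_start."""
    return [trial_start + timedelta(days=d) for d in CHECKIN_DAYS]


def due_checkins(trials: dict[str, date], today: date) -> list[tuple[str, int]]:
    """Run once a day: return (user, day) pairs whose check-in is due today."""
    due = []
    for user, start in trials.items():
        day = (today - start).days
        if day in CHECKIN_DAYS:
            due.append((user, day))
    return due
```

For example, with `user_a` starting a trial on 2024-05-01 and `user_b` on 2024-05-05, a run on 2024-05-08 returns `[("user_a", 7), ("user_b", 3)]`. No one has to remember either check-in.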

None of this requires a prompt. It runs. You check the output.

Three questions to cut through the marketing

Before trusting any tool that calls itself an AI cofounder, ask these:

1. What does it do when you’re not using it? If the answer is nothing, it’s a capable assistant. Useful for specific jobs, but not a cofounder. That distinction matters for what you should expect from it.

2. Does it own something end-to-end? Not “help with.” Own. It tracks performance in its domain, adjusts based on what it finds, and reports back without you asking. If you’re still the one checking whether outreach is converting and deciding to change strategy, you’re still doing that job yourself.

3. Does context accumulate? If every session starts fresh, you have a chat interface. If the agent knows who replied last week, which threads had traction, which sequences converted, and builds on that in the next run, that’s different. Context compounding is what makes the work get better over time.

Most “AI cofounder” products pass zero of these. Some pass one.
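The third question, whether context accumulates, is the easiest to picture in code. The sketch below is a hypothetical in-memory context that records who was contacted, who replied, and which communities produced replies, then uses that record to plan the next run. Persisting it between runs (to a file or database) is assumed, not shown; the `Context` class and the community names are illustrative.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Context:
    contacted: set[str] = field(default_factory=set)    # handles already messaged
    replied: set[str] = field(default_factory=set)      # handles that replied
    traction: Counter = field(default_factory=Counter)  # community -> replies seen

    def record(self, handle: str, community: str, got_reply: bool) -> None:
        """Log the outcome of one contact so later runs can use it."""
        self.contacted.add(handle)
        if got_reply:
            self.replied.add(handle)
            self.traction[community] += 1

    def plan_next_run(self, candidates: list[tuple[str, str]]) -> list[tuple[str, str]]:
        """Skip people already contacted; try communities with past traction first."""
        fresh = [c for c in candidates if c[0] not in self.contacted]
        return sorted(fresh, key=lambda c: self.traction[c[1]], reverse=True)
```

A tool that starts every session fresh would hand `plan_next_run` an empty `Context` each time, so every plan would look like the first one.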

What this means if you’re evaluating AI tools

There are good AI tools that are prompt interfaces. They’re useful for research, writing, and quick tasks. They’re just not cofounders. The framing doesn’t fit, and buying into it sets you up for the wrong expectations.

The better question isn’t “is this smarter than ChatGPT?” It’s: what does it do when I’m not using it? What does it own? How does context about my specific product accumulate over time?

We track source attribution for every CrossMind user. Among the 20 users with full attribution data (excluding Ivan), the ones getting the most output are not the most active in chat. They’re the ones who set up the execution loops and checked the logs. They didn’t do less work. They did different work.

Jake found CrossMind after 10 failed startups. His read: every one had the same pattern. He could build. Distribution was always the wall. What he needed wasn’t advice about the wall. He needed something already working on it while he was building.

That’s the job. Ownership, execution, compounding context. Not a better chat interface.

CrossMind is built on scheduled execution, owned domains, and compounding context. The onboarding starts with your product URL and takes about 40 minutes to produce the first output: a community map, specific Reddit threads, and target Twitter accounts for your ICP.